Exploring Voice Interface Design

PLEASE NOTE: Since I undertook this project in 2019, AI language models like ChatGPT have radically changed this landscape, so this method of prototyping would need to be updated to take the new technology into account. I still include it as a case study because it showcases my approach to research, innovative prototyping and creative exploration of a new subject matter. If you would like to hire me to work on prototyping voice interfaces, please get in touch!

1/5

Intro

This research and prototyping project came about after we identified a skills gap in the design team around voice user interfaces, chatbots and conversation design. Sitting at the intersection of design, language and technology, this subject hugely appealed to my interests, so I was happy to research it and present my findings back to the team. There wasn't a specific commercial objective for the piece, but it was a great opportunity to create talking points around cutting-edge technologies that could help generate leads for projects with new and existing clients.

2/5

Research & Analysis

In the research piece I explored the history of voice technology from the 1950s up to the present-day market of smart assistants like Amazon's Alexa or Apple's Siri. I also explored some of the foundational concepts we need to consider when designing conversations, including Paul Grice's Cooperative Principle and some relevant heuristics from Jakob Nielsen's user interface design guidelines. Finally, I discovered a method of testing and refining voice interface scripts (the list of pre-defined responses a voice assistant is provided with in order to deal with the myriad utterances users would throw at it), known as 'Wizard of Oz testing'.

3/5

Prototyping Exercise

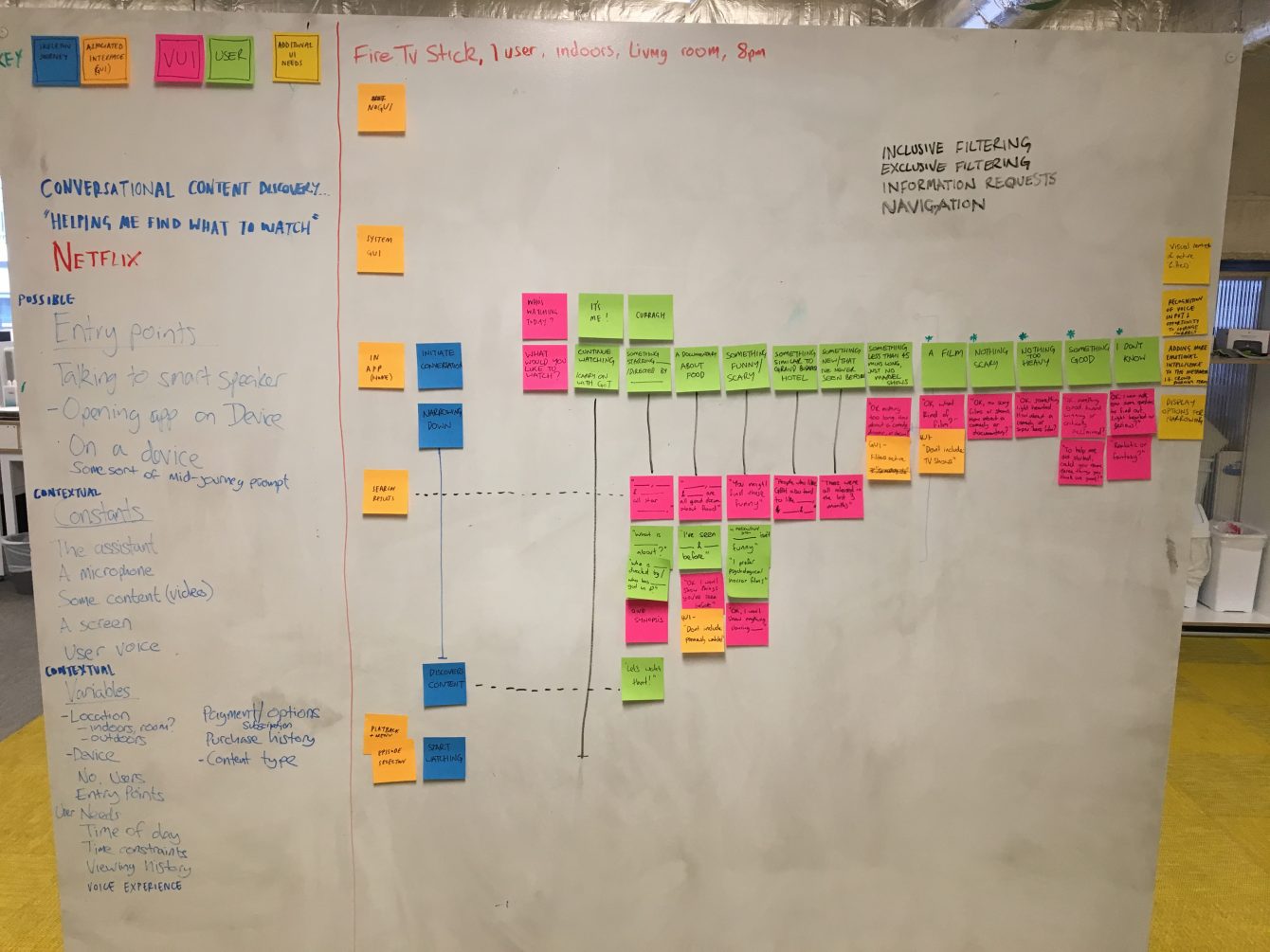

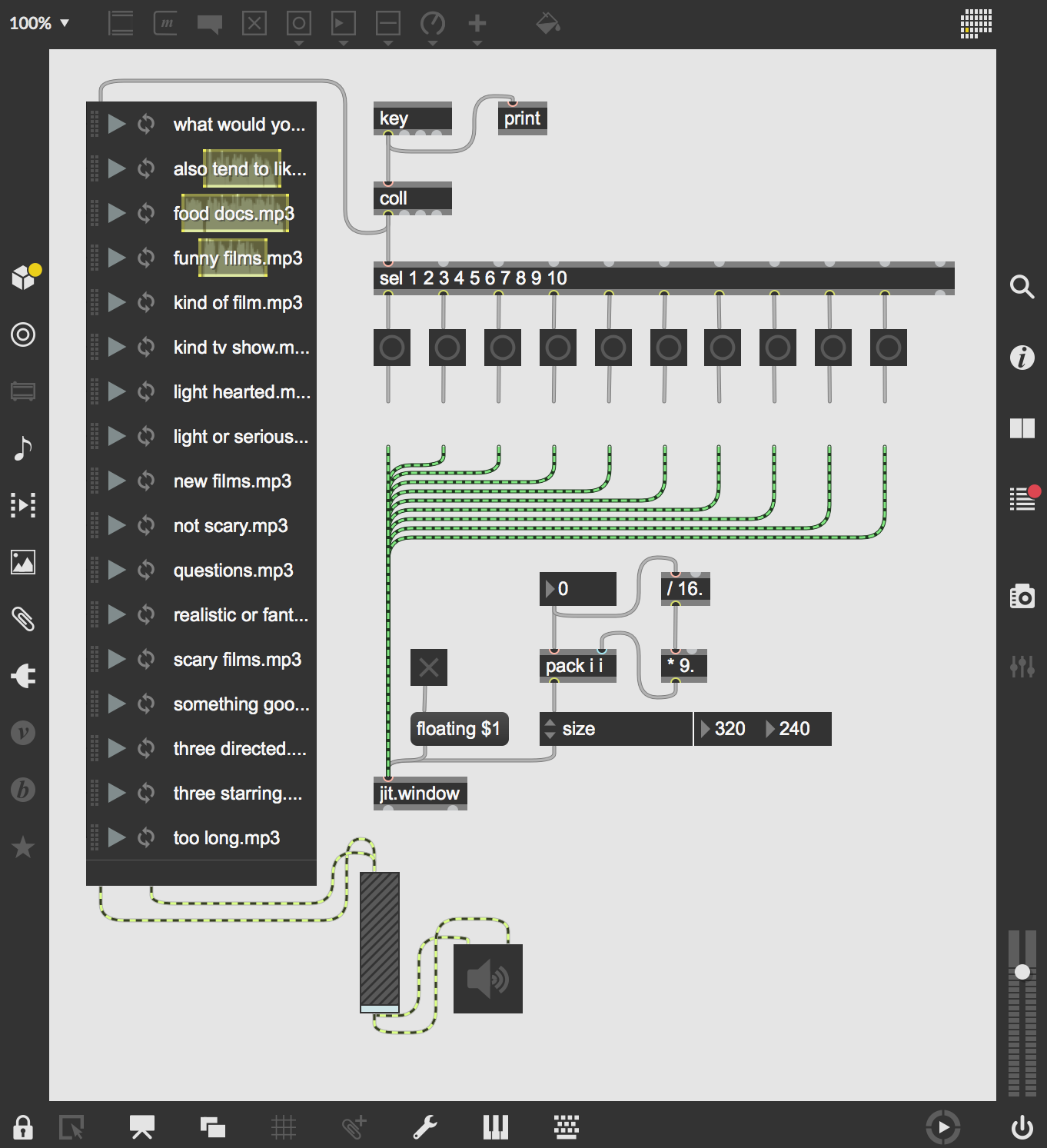

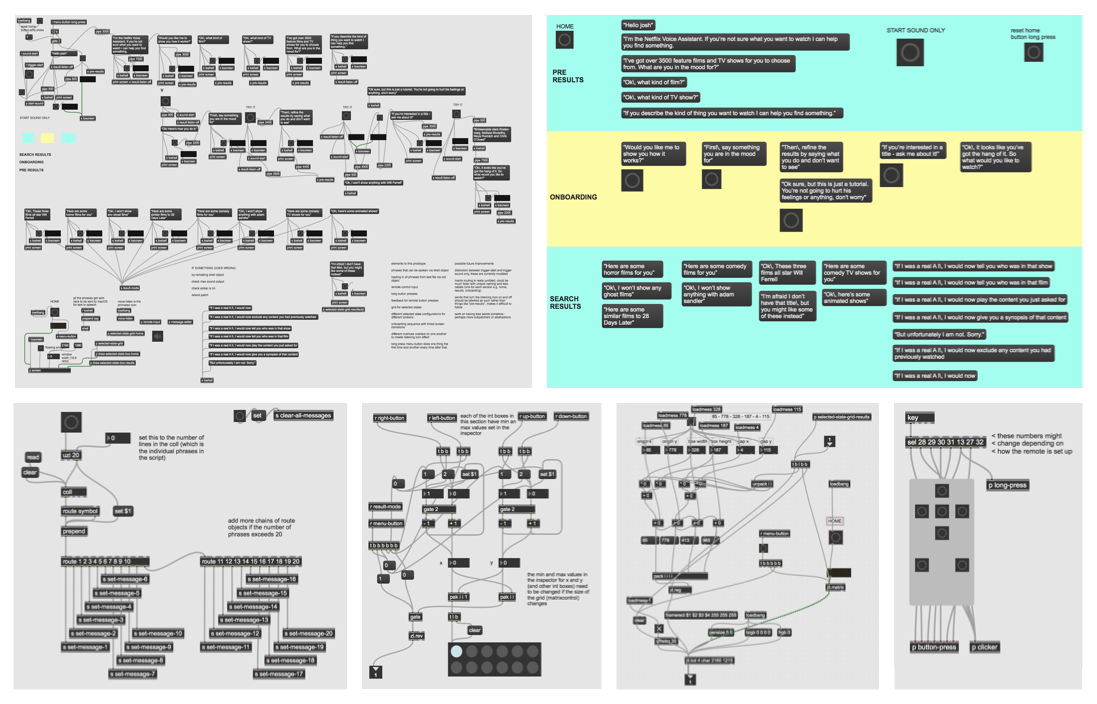

For this exercise I designed, built and tested a prototype that asked the question: "What if Netflix came with its own voice assistant?" Using the aforementioned 'Wizard of Oz testing' method (which in this context essentially means turning your computer into a high-tech ventriloquist's dummy) as a starting point, I saw an opportunity to construct a prototype that could explore how screens and voice interface elements interact with each other in the context of a smart TV VOD product.

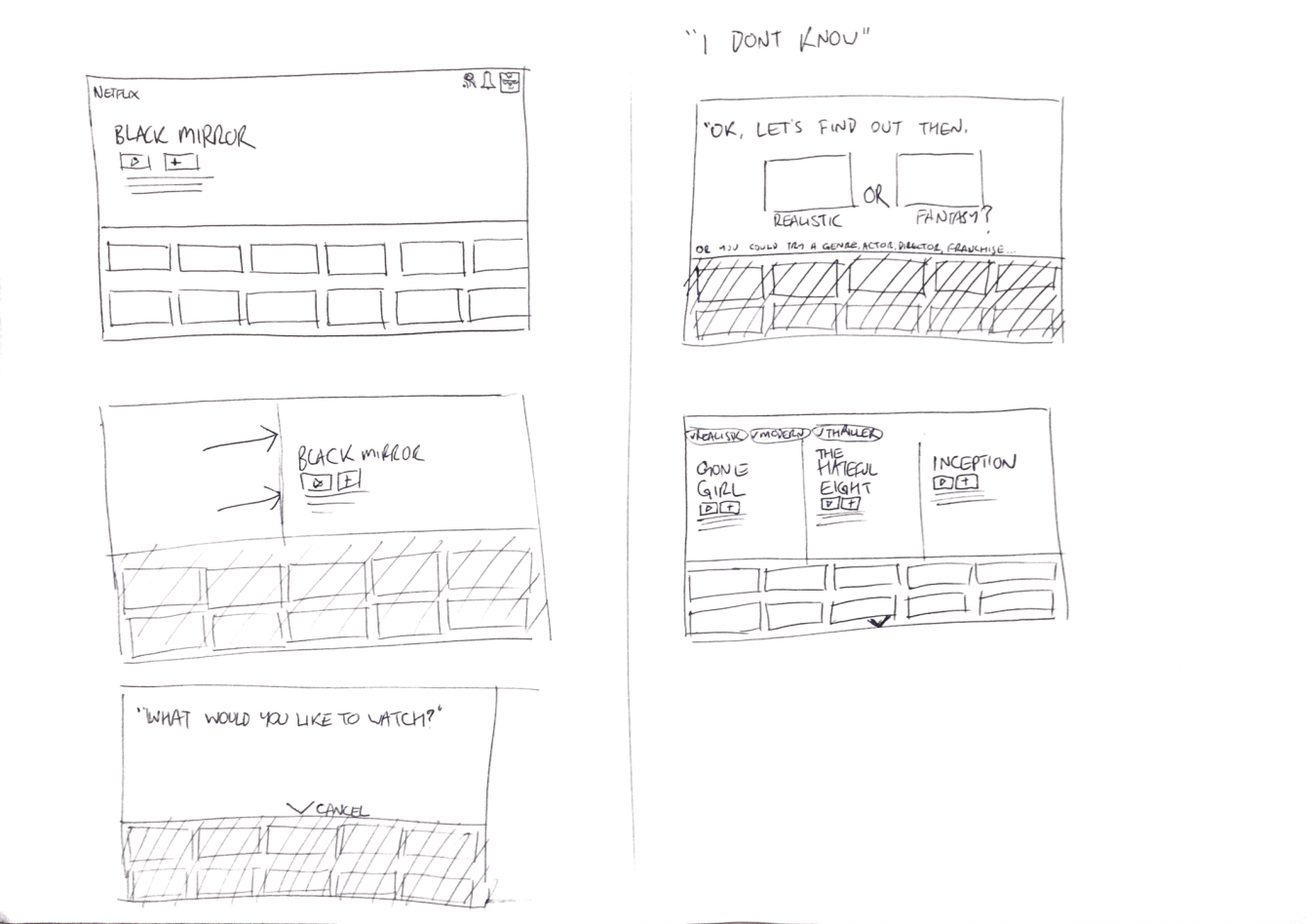

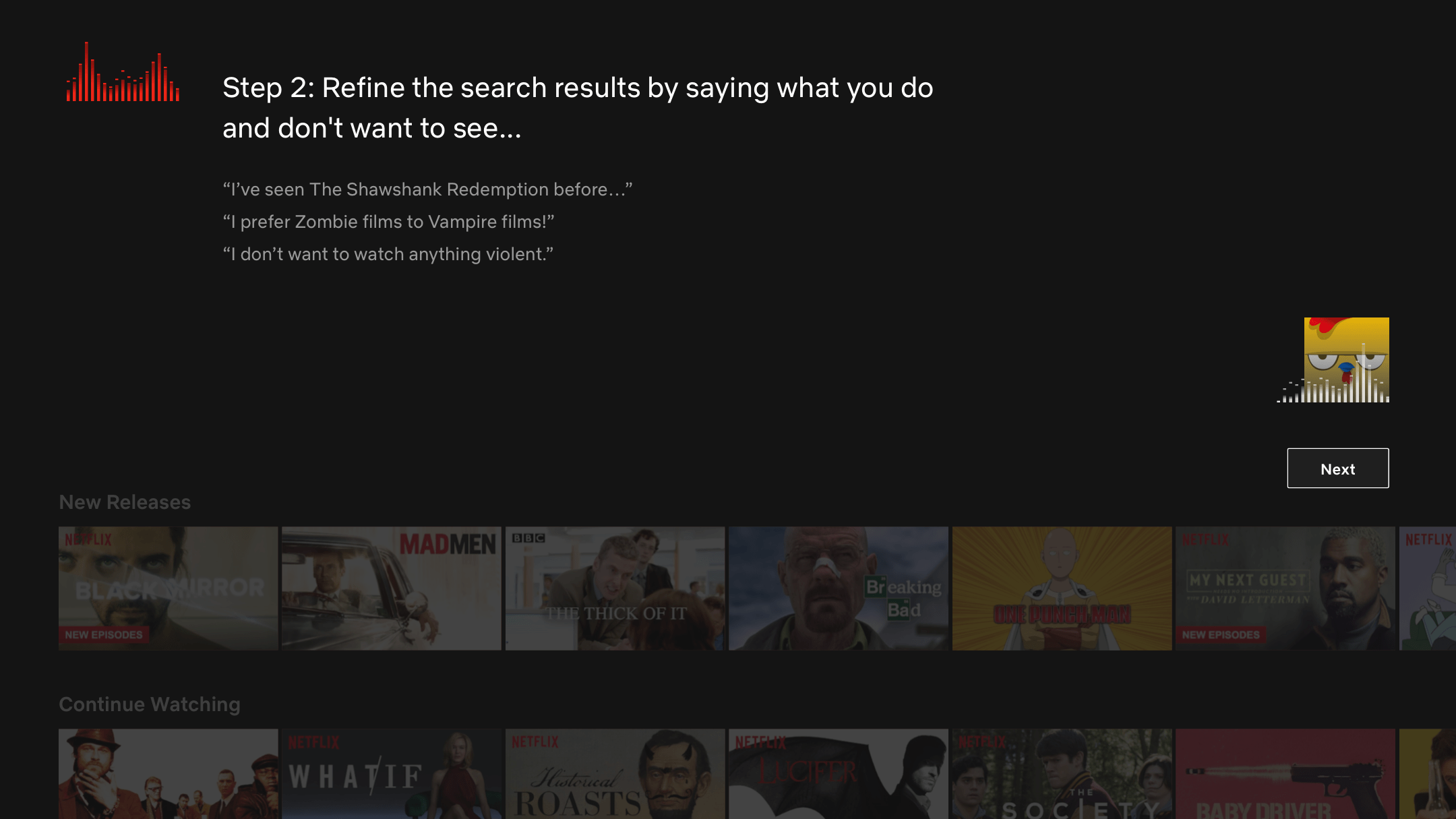

The prototype went through a few different iterations, initially trying to model what the Netflix voice assistant might offer, then settling on teaching the user how to interact with it through an onboarding feature. I found that users had little to no expectation of what a feature like this would be capable of, and so would need to be shown how to interact with it.

4/5

Results & Learnings

My main takeaway from this piece was that figuring out how visual UI elements interact with a voice interface is an incredibly complex exercise! It had to encompass screen design, conversation design, sound design, audio reactivity and animation in order to build a more complete picture of what was going on at any one time. My first iteration of the prototype elicited some good-natured bewilderment from my test participant. Later iterations demonstrated a refined onboarding flow for users new to this way of interacting with a familiar product or service.

Gallery

Mapping the conversation script

The inner workings of the first prototype

The inner workings of the first prototype

Sketching screens

User testing the final prototype

Screen from the final prototype

Screen from the final prototype

The fairly complex inner workings of the final prototype!